Introduction

The healthcare context is characterized by a high degree of complexity, involving a large number and variety of medical disciplines networked for prevention, diagnosis, therapy and follow-up of human pathologies. Despite the earnest efforts of healthcare personnel, things sometimes do go wrong, producing unintentional (and possibly serious) harm to patients. As such, patient safety must be considered one of the leading healthcare challenges.

Several landmark studies in the field of patient safety, reviewed in the Editorial “Reducing errors in medicine” published by Donald M. Berwick and Lucian L. Leape in the British Medical Journal in 1999 (1), highlighted that serious or potentially serious medical errors can occur in the care of 6.7 out of every 100 patients and in 3.7% of hospital admissions, over half of which would have been preventable and 13.6% of which might lead to death. It was also estimated that medical errors cost the U.S. $17–29 billion a year. Remarkably, approximately 2.2 million US hospital patients experience adverse drug reactions (ADRs) to prescribed medications each year (2). These concerning estimates are strongly supported by various data collected over the past 20 years. In 1995, the U.S. federal Centers for Disease Control and Prevention (CDC) assessed the number of unnecessary antibiotics prescribed annually for viral infections to be 20 million. Moreover, approximately 7.5 million unnecessary medical and surgical procedures were performed annually in the US, while approximately 8.9 million Americans were hospitalized unnecessarily (3). According to various sources, the overall estimated annual mortality and economic cost of improper medical intervention is much higher, approaching 783,936 deaths and $282 billion respectively (including 106,000 deaths and $12 billion for ADRs, 98,000 deaths and $2 billion for generic medical errors, 115,000 deaths and $55 billion for bedsores, 88,000 deaths and $5 billion for hospital-acquired infections, 37,136 deaths and $122 billion for unnecessary procedures, and 32,000 deaths and $9 billion for surgery-related complications) (3). It is therefore notable that the American healthcare system might itself be the leading cause of death and injury, and that the estimated 10-year total of 7.8 million iatrogenic deaths is higher than all the casualties from all the wars fought by the US throughout its entire history.
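As a quick arithmetic check of the figures quoted above, the itemized categories can be summed and compared with the stated totals. This is only a sketch: the variable names are illustrative, and the numbers are copied from the text rather than from the cited source.

```python
# Itemized annual estimates quoted in the text: (deaths, cost in billions of USD).
itemized = {
    "adverse drug reactions": (106_000, 12),
    "generic medical errors": (98_000, 2),
    "bedsores": (115_000, 55),
    "hospital-acquired infections": (88_000, 5),
    "unnecessary procedures": (37_136, 122),
    "surgery-related complications": (32_000, 9),
}

# Overall totals quoted in the text.
QUOTED_TOTAL_DEATHS = 783_936
QUOTED_TOTAL_COST_BN = 282

listed_deaths = sum(deaths for deaths, _ in itemized.values())
listed_cost_bn = sum(cost for _, cost in itemized.values())

# Part of the quoted totals not covered by the six itemized categories.
unlisted_deaths = QUOTED_TOTAL_DEATHS - listed_deaths
unlisted_cost_bn = QUOTED_TOTAL_COST_BN - listed_cost_bn

# Ten-year projection, assuming the annual total stays constant.
ten_year_deaths = QUOTED_TOTAL_DEATHS * 10

print(f"itemized: {listed_deaths:,} deaths, ${listed_cost_bn} billion")
print(f"not itemized: {unlisted_deaths:,} deaths, ${unlisted_cost_bn} billion")
print(f"10-year total: {ten_year_deaths:,} deaths")
```

The six categories account for 476,136 deaths and $205 billion, so the quoted totals evidently include further categories from the cited source that are not itemized here. The 10-year figure of about 7.8 million follows from multiplying the annual total by ten.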

The landmark report “To Err is Human”, published by the US Institute of Medicine (IOM) in 1999, stated that as many as 98,000 people die needlessly each year due to preventable medical harm, the equivalent of a national disaster every week of every year (4) or three jumbo-jet crashes every 2 days, far exceeding the death toll from motor vehicle accidents, breast cancer, or AIDS, three causes that receive far more public attention (5). Shortly afterward, these data began to marshal considerable public and professional sentiment. President Clinton promptly embraced them and promoted an effort to address the problem through the Quality Interagency Coordination Task Force. The IOM also recognized the urgent need for firm action to reduce this sorrowful number of preventable harms, and the U.S. Congress allocated $50 million to the Agency for Healthcare Research and Quality (AHRQ) of the U.S. Department of Health & Human Services for patient safety research grants in the budget for the year 2001. Despite this initial flurry of activity, progress slowed once the media moved on to the next crisis and, as such, a further document was released by the IOM in late 2001, entitled “Crossing the Quality Chasm: A New Health Care System for the 21st Century” (6). This report renewed the urgent call for fundamental change to close the quality gap in healthcare, also recommending a sweeping redesign of the U.S. healthcare system and providing overarching principles and specific direction for policymakers, healthcare leaders, clinicians, regulators, purchasers, and others.
In this volume, the steering committee presented a set of performance expectations for the 21st century health care system, a set of 10 new rules to guide patient-clinician relationships, a suggested organizing framework to better align the incentives inherent in payment and accountability with improvements in quality, as well as the key steps to promote evidence-based practice and strengthen clinical information systems. In May 2004, the World Health Organization (WHO) also recognized the magnitude of the problem and supported the creation of an international alliance named “World Alliance for Patient Safety”, to facilitate the development of patient safety policy and practice in all Member States and to act as a major force for international improvement. The work of the WHO’s World Alliance for Patient Safety has been supported by a growing number of partnerships with safety agencies, technical experts, patient groups and many other stakeholders from around the world, who help to drive the patient safety agenda forward. One of the leading issues was the development of an International Classification for Patient Safety (ICPS), which is intended to harmonize the description of patient safety incidents into a common (standardized) language, and to allow systematic collection of information about patient safety incidents (both adverse events and near misses) from a variety of sources, as well as statistical analysis, learning and resource prioritization (7).

Despite the notable focus placed on the issue of patient safety, the Consumers Union has recently released a report symbolically entitled “To Err is Human – To Delay is Deadly”. Its subheading is rather disheartening, asserting that “Ten years later, a million lives lost, billions of dollars wasted” (8). This expert, independent, nonprofit U.S. organization, whose mission is to work for a fair, just, and safe marketplace for all consumers and to empower consumers to protect themselves, highlighted that it is still unclear whether any real progress has been made in this field, and that efforts to reduce the harm caused by the medical care system have been few and fragmented. With little transparency and no public reporting (except where hard-fought state laws required public reporting of hospital infections), the scarce data available do not support any real progress. It was in fact reported that preventable medical harm still accounts for more than 100,000 deaths each year (a million lives over the past decade) and that medication errors in hospitals alone still cost $3.5 billion a year. Moreover, based on paper chart reviews and billing records, it is also estimated that patient safety declined by 1 percent in each of the six years following the IOM report so that, according to these concerning data, U.S. healthcare is presumed to be less safe than it was in 1999 (9). Some key issues were cited to attest to the failure of the safety policy of the healthcare system, including evidence that:

1. few hospitals have adopted well-known systems to prevent medication errors and the U.S. Food & Drug Administration (FDA) rarely intervenes;

2. a national system of accountability through transparency as recommended by the IOM has not been created;

3. no national entity has been empowered to coordinate and track patient safety improvements; and

4. doctors and other health professionals are not expected to demonstrate competency.

It was thereby concluded that this unjustified medical harm is as yet unacceptable, demanding urgent and determined actions from the healthcare system that might include further prevention of medication errors, creation of accountability through transparency (i.e., identification and learning from preventable medical harm through both mandatory and voluntary reporting systems), establishment of a national focus to track progress, increase of the standards for improvements and establishment of major competency in patient safety for doctors, nurses and healthcare organizations.

To further boost the establishment of a culture of safety in healthcare, in 2006 the former U.S. President George Bush signed the Deficit Reduction Act (DRA), requiring the Secretary to identify conditions that are:

a) high cost or high volume or both;

b) result in the assignment of a case to a Diagnosis Related Group (DRG) that has a higher payment when present as a secondary diagnosis; and

c) could reasonably have been prevented through the application of evidence-based guidelines.

For discharges occurring after October 1, 2008, hospitals no longer receive additional payment for cases identified by the National Quality Forum in which one of the selected conditions was not present on admission (object inadvertently left in after surgery, air embolism, blood incompatibility, catheter-associated urinary tract infection, pressure ulcer (decubitus ulcer), vascular catheter-associated infection, surgical site infection-mediastinitis (infection in the chest) after coronary artery bypass graft surgery, and certain types of falls and traumas). In 2009 additional conditions were included (i.e., surgical site infections following certain elective procedures, including certain orthopedic surgeries and bariatric surgery for obesity, certain manifestations of poor control of blood sugar levels, and deep vein thrombosis or pulmonary embolism following total knee replacement and hip replacement procedures) (10). By adopting this restrictive policy, the Centers for Medicare and Medicaid Services estimate that the federal government will realize savings of $60 million per year, beginning in 2012. The UK government is now starting a similar embargo, inasmuch as the UK National Health Service (NHS) operating framework for 2010-11 has set important changes, with payment increases to hospitals only available by improving quality. Beginning from April 2010, primary care trusts will not pay if treatment results in one of the seven listed “never events” (i.e., wrong site surgery, retained instrument after an operation, wrong route of administration of chemotherapy, misplaced nasogastric or orogastric tube not detected before use, inpatient suicide by use of non-collapsible rails, in-hospital maternal death from postpartum haemorrhage after elective caesarean section, and intravenous administration of mis-selected concentrated potassium chloride) (11). The clinical conditions for which both the U.S. 
Medicare and the UK NHS will cease to pay are obviously preventable, as are the vast majority of laboratory errors. In the predictable scenario that national healthcare systems may generalize the principle of refusal to pay for poor-quality care beyond these initial and predictably symbolic national initiatives, laboratory professionals will be encouraged to place more focus on the best possible quality and clinical value of laboratory diagnostics (12).

Taxonomy of patient safety

Quality is the degree to which health services for individuals and populations increase the likelihood of desired health outcomes and are consistent with current professional knowledge. Patient safety is commonly considered as the reduction of risk of unnecessary harm associated with healthcare to an acceptable minimum, where the acceptable minimum reflects the collective notions of current knowledge, resources available and the context in which care is delivered, weighed against the risk of non-treatment or other treatment. A patient safety practice is therefore a type of process or structure whose application reduces the probability of adverse events resulting from exposure to the healthcare system across a range of diseases and procedures. Healthcare-associated harm is any harm arising from or associated with plans or actions taken during the provision of healthcare, rather than an underlying disease or injury. A patient safety incident is an event or circumstance that could have resulted, or did result, in unnecessary harm to a patient, where harm means impairment of structure or function of the body and/or any deleterious effect arising therefrom (i.e., disease, injury, suffering, disability and death) (13).

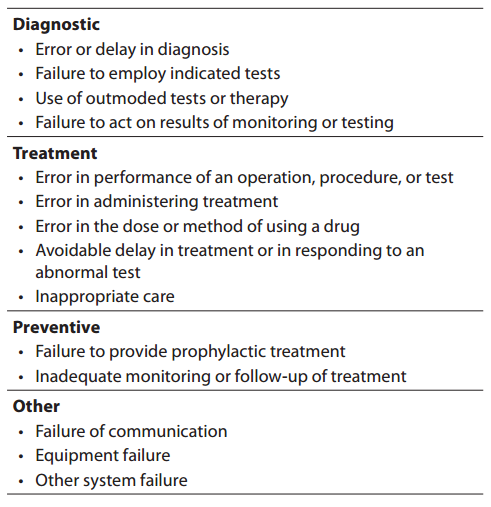

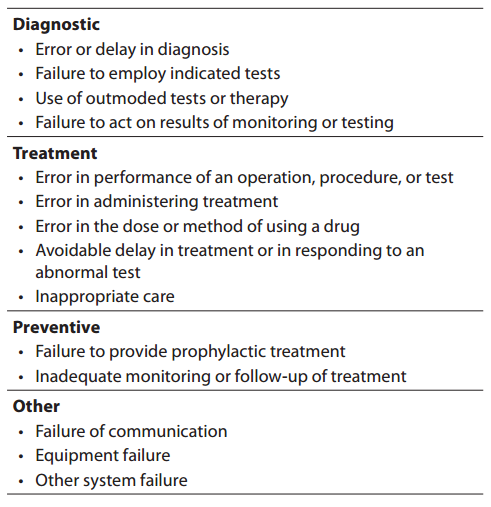

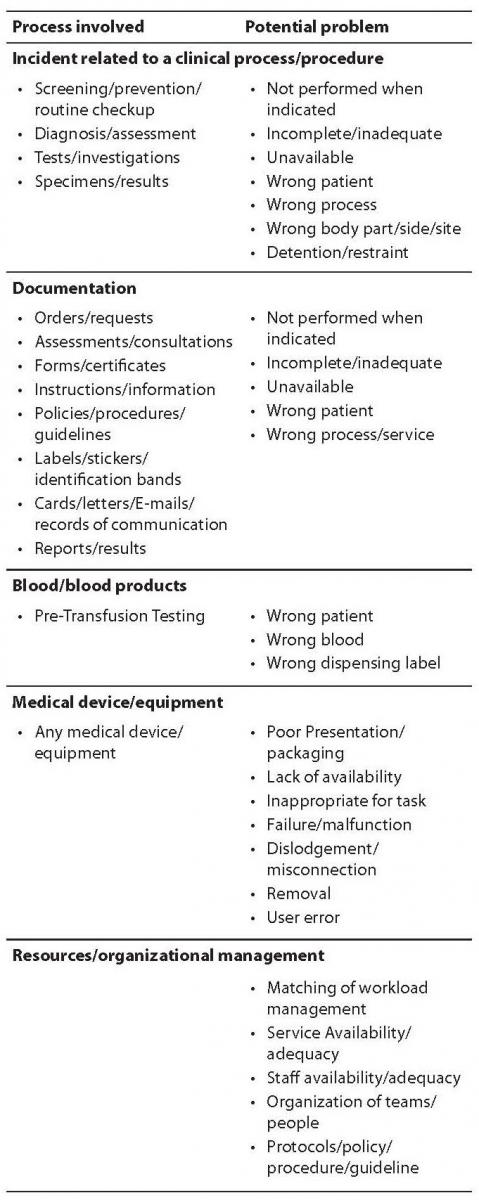

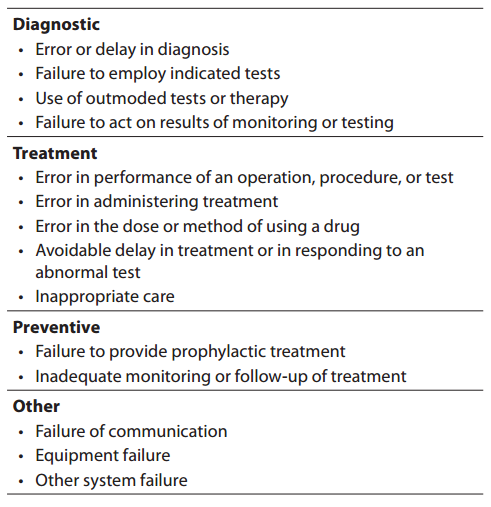

The definitions of medical error are still subject to debate, as there are many types (from minor to major), and the causality is often undetermined, being usually attributed to a variety of factors such as human vulnerability, medical complexity and system failures. Although a medical error is technically portrayed as an adverse event or near miss that is preventable with the current state of medical knowledge (14), medical errors are more conventionally referred to as an incorrect clinical diagnosis, a mishandled therapeutic procedure or, globally, as the result of flawed clinical decision making. On the other hand, a medical mistake has also been defined as a commission or an omission with potentially negative consequences for the patient that would have been judged wrong by skilled and knowledgeable peers at the time it occurred, independent of whether there were any negative consequences (15). The IOM also defined an error as the failure of a planned action to be completed as intended (i.e., error of execution) or the use of a wrong plan to achieve an aim (4). Lucian L. Leape defines an error as an “unintended act (either omission or commission) or an act that does not achieve its intended outcome” (5), while James Reason defines it as a “failure of a planned sequence of mental or physical activities to achieve its intended outcome when these failures cannot be attributed to chance” (16). There is however a major area of agreement among all these definitions, namely that adverse outcomes due to the natural history of a disease that does not respond to treatment, or to the foreseeable complications of a correctly performed medical procedure, are excluded. According to the IOM, medical errors can be classified into four major categories along the clinical path, namely “diagnostic”, “treatment”, “prevention” and “others” (Table 1).

Table 1. Classification of medical errors.

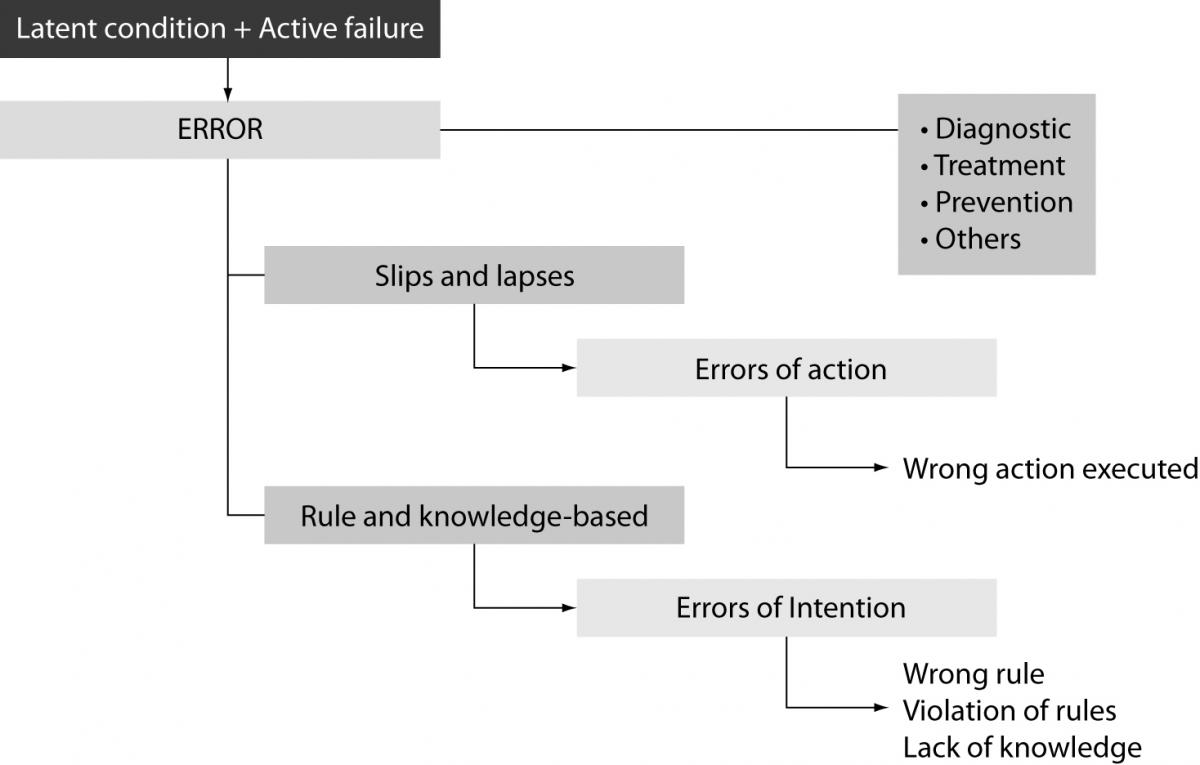

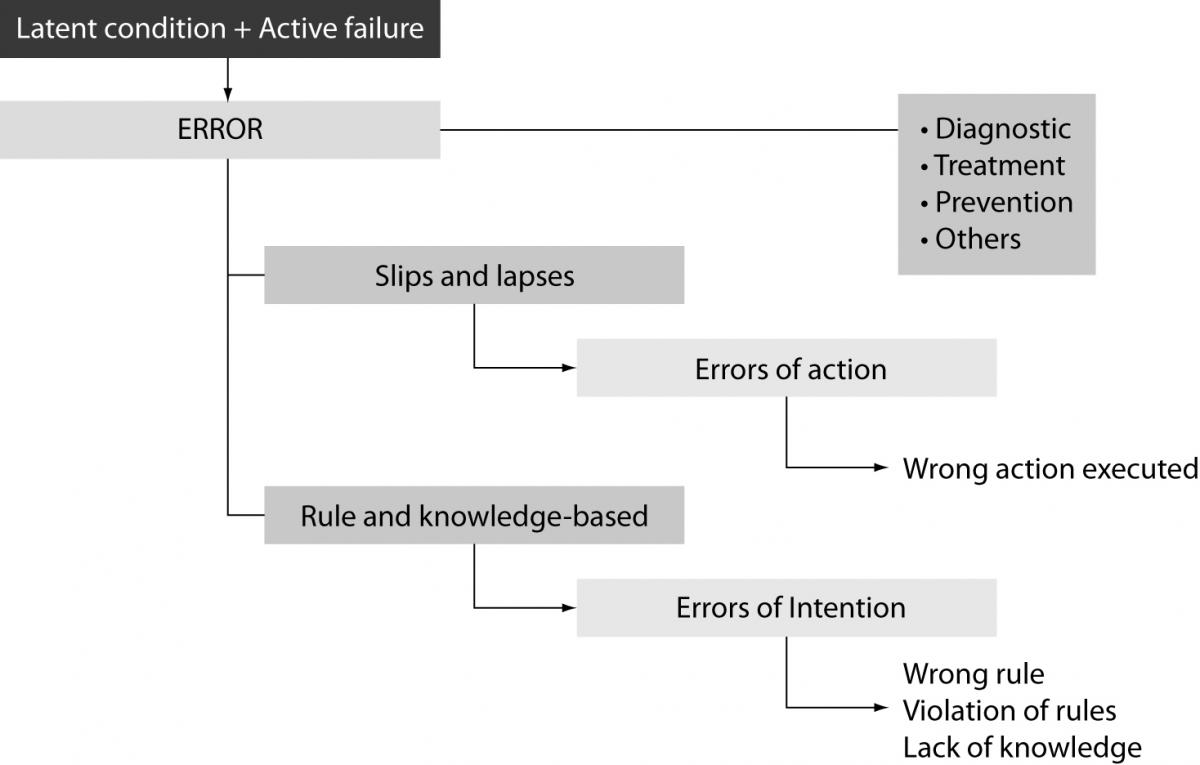

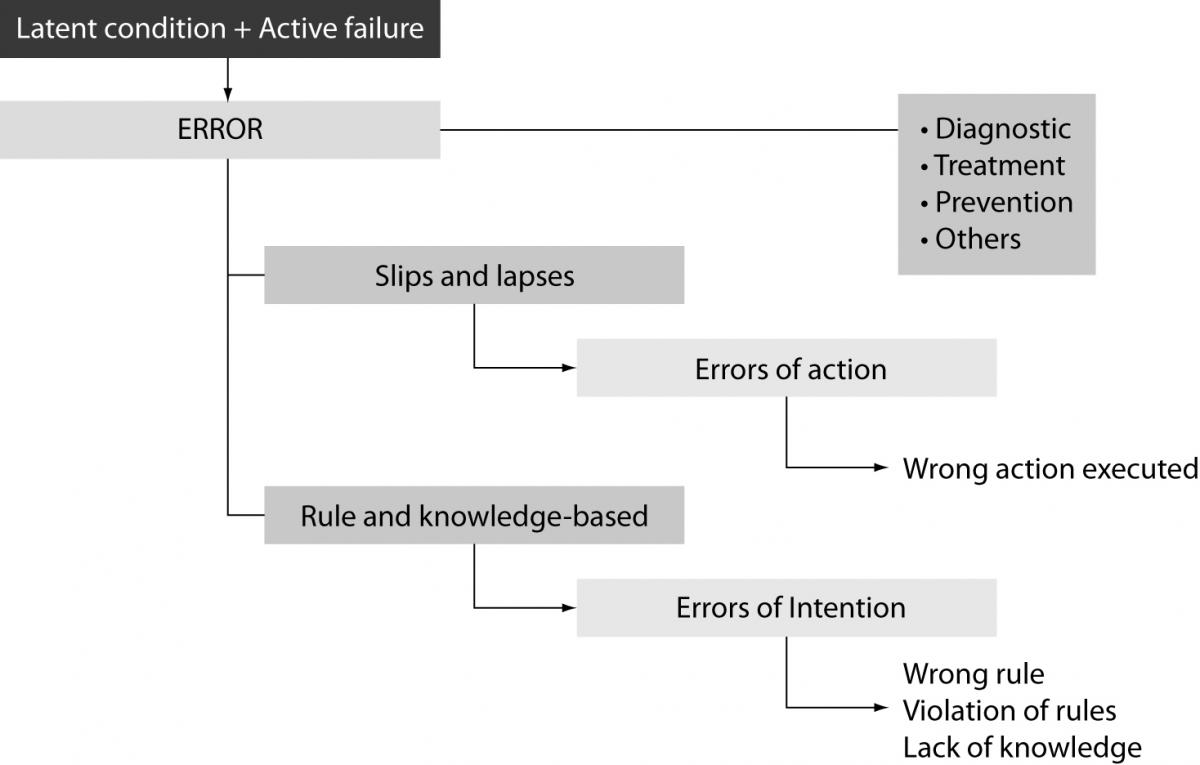

Incidents are traditionally classified as near miss (an incident which did not reach the patient), no harm incident (an incident which reached a patient but no discernible harm resulted) and harmful incident or adverse event (an incident which resulted in harm to a patient). The degree of a patient safety incident can be further graded within the broad conceptual framework of patient outcome, ranging from no harm (patient is not symptomatic or no symptoms detected and no treatment is required), mild harm (patient is symptomatic, symptoms are mild, loss of function or harm is minimal or intermediate but short term, and no or minimal intervention is required), moderate harm (patient is symptomatic, requiring intervention, an increased length of stay, or causing permanent or long term harm or loss of function), severe harm (patient is symptomatic, requiring life-saving intervention or major surgical/medical intervention, shortening life expectancy or causing major permanent or long term harm or loss of function), to death (which was caused or brought forward in the short term by the safety incident) (13). Most errors result from active failures, which are unsafe acts committed by people who are in direct contact with the system and have a direct and usually short-lived effect on the integrity of the defenses, or from latent conditions, which are fundamental vulnerabilities in one or more layers of the system such as system faults, system and process misfit, alarm overload, and inadequate maintenance. As such, latent conditions may lie dormant within the system for many years before they combine with active failures and local triggers to create an accident opportunity. Errors can also be classified as skill-based slips and lapses (i.e., errors of action), or rule- and knowledge-based mistakes (i.e., errors of intention). 
In the former case the operators actually knew what to do but performed the wrong action (e.g., administering the wrong drug, processing an unsuitable specimen), whereas in the latter case they failed to choose the right rule (e.g., requesting an inappropriate diagnostic test), violated rules (e.g., administering the right drug at the wrong time, releasing laboratory test results in violation of quality controls), or did not know what they were doing (e.g., failing to understand the distinction between references and values in a spreadsheet) (Figure 1). Basically, slips and lapses are the easiest to identify and recover from, as users can usually recognize that they have made an error. Conversely, recovering from rule-based errors is more challenging, since this means that the whole system has to understand the process and rules associated with some specific intention. Finally, recovering from knowledge-based errors is very difficult because the system has to know the intentions of the user.

Figure 1. Classification of medical errors.
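The incident categories, harm grades and error classes described above can be sketched as a small data model. This is a minimal illustration, not an official ICPS schema: the enum and function names are assumptions introduced here, and only the category labels come from the text.

```python
from enum import Enum


class IncidentType(Enum):
    NEAR_MISS = "near miss"                       # did not reach the patient
    NO_HARM = "no harm incident"                  # reached the patient, no discernible harm
    HARMFUL = "harmful incident (adverse event)"  # resulted in harm


class HarmDegree(Enum):
    NONE = 0
    MILD = 1
    MODERATE = 2
    SEVERE = 3
    DEATH = 4


class ErrorClass(Enum):
    SLIP_OR_LAPSE = "skill-based error of action"
    RULE_BASED_MISTAKE = "rule-based error of intention"
    KNOWLEDGE_BASED_MISTAKE = "knowledge-based error of intention"


def classify_incident(reached_patient: bool, harm: HarmDegree) -> IncidentType:
    """Map 'did it reach the patient' and the harm grade to an incident type."""
    if not reached_patient:
        if harm is not HarmDegree.NONE:
            raise ValueError("an incident that did not reach the patient cannot have harmed them")
        return IncidentType.NEAR_MISS
    if harm is HarmDegree.NONE:
        return IncidentType.NO_HARM
    return IncidentType.HARMFUL


# Example: a mislabelled tube caught before any result was released.
print(classify_incident(reached_patient=False, harm=HarmDegree.NONE).value)
```

Keeping the harm grade separate from the incident type mirrors the text, where the three incident categories describe whether the patient was reached and harmed, and the five-level scale grades the outcome.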

Like other medical areas, laboratory diagnostics is frequently delivered in a pressurized and fast-moving environment, involving a vast array of innovative and complex technologies, so that it cannot be considered completely safe. A reliable definition of laboratory errors is that originally provided by Bonini et al. as “a diagnosis that is missed, wrong, or delayed, as detected by some subsequent definitive test or finding”, which has been further acknowledged and adopted by the ISO Technical Report 22367, as “a defect occurring at any part of the laboratory cycle, from ordering tests to reporting, interpreting, and reacting to results” (17).

Diagnostic errors

Although it is difficult to estimate accurately the rate of diagnostic errors in general, it has been reported that the prevalence of laboratory errors can be as high as one every 330–1000 events, 900–2074 patients, and 214–8316 laboratory test results (18). It is therefore surprising that diagnostic errors have frequently been underestimated in clinical practice over the past decades. This has been attributed to two main reasons. First, it is commonly perceived that diagnostics has pursued a virtuous path, culminating in a substantial reduction of vulnerable steps. Second, diagnostic errors in general might frequently go undetected, since they do not always translate into real harm for the patient, or the eventual harm cannot be reliably traced back to a diagnostic error. While laboratory errors are traditionally identified with analytical problems and uncertainty of measurements, an extensive scientific literature now attests that most errors (up to 80–90%) seem to arise in the extra-analytical phases of the total testing process (19–24). Even more interestingly, patient care involving non-laboratory personnel seems to account for the majority of errors, representing 95.2% of these mistakes. Data from the most representative studies on this topic show that preanalytical errors (e.g., insufficient samples, poor sample conditions, inappropriate sample handling and transport, incorrect identification, incorrect sample) are the first cause of variability in laboratory testing, accounting for roughly half or more (46–68%) of all laboratory errors, whereas analytical errors (e.g., equipment malfunction, release of results despite poor quality controls, analytical interferences) and postanalytical errors (e.g., inappropriate reporting or analysis, improper data entry, long turnaround times, failure to notify critical values) represent respectively 7–13% and 18–47% of all mistakes in the total testing process. 
Although it is rarely clear whether a diagnostic error might still impact negatively on patient outcome inasmuch as spurious or absurd results are usually ignored because easily recognized, under critical conditions there is indeed a high chance that near-misses might translate into serious incidents (5 to 20% of the cases) such as the use of redundant procedures (e.g., blood grouping, blood safety testing, constitutional tests in general), repeat testing, misdiagnosis and thereby wrong clinical decision making.
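The frequencies and phase shares quoted above are simple proportions, and a laboratory could compute the same quantities from its own error log. The sketch below assumes a hypothetical log in which each recorded error is tagged with its phase; the function names and the example counts are illustrative only and are not data from the cited studies.

```python
from collections import Counter

PHASES = ("preanalytical", "analytical", "postanalytical")


def one_in_n_to_percent(n: float) -> float:
    """Convert a 'one error every n events' frequency into a percentage."""
    if n <= 0:
        raise ValueError("n must be positive")
    return 100.0 / n


def phase_shares(error_phases):
    """Return the percentage of logged errors falling in each testing phase."""
    counts = Counter(error_phases)
    unknown = set(counts) - set(PHASES)
    if unknown:
        raise ValueError(f"unknown phase(s): {sorted(unknown)}")
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no errors logged")
    return {phase: 100.0 * counts[phase] / total for phase in PHASES}


# Hypothetical log of 20 errors (made-up counts, for illustration only).
log = ["preanalytical"] * 12 + ["analytical"] * 2 + ["postanalytical"] * 6
shares = phase_shares(log)
extra_analytical = shares["preanalytical"] + shares["postanalytical"]

for phase, pct in shares.items():
    print(f"{phase}: {pct:.0f}%")
print(f"extra-analytical: {extra_analytical:.0f}%")

# "One every 330-1000 events" expressed as a percentage range.
print(f"{one_in_n_to_percent(1000):.2f}% to {one_in_n_to_percent(330):.2f}%")
```

With these made-up counts the shares are 60%, 10% and 30%, which fall inside the ranges reported in the text, and the extra-analytical phases together account for 90% of the logged errors.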

In spite of this apparent underestimation of diagnostic errors, laboratory medicine has been at the forefront in pursuing the issue of patient safety. More than 80 years ago, the American Society of Clinical Pathologists (ASCP), the forerunner of the current College of American Pathologists (CAP), established a voluntary proficiency testing program focused on analytical quality (25). In the early 1990s, the CAP initiated several Q-Probes studies and Q-Tracks investigations to collect and analyze results on a variety of performance measures, including magnitude and significance of errors, strategies for error reduction, and willingness to implement each of these performance measures (26). As such, laboratory professionals and regulatory bodies, together with the diagnostics industry, have been focusing for decades on improving analytical quality through the establishment of Internal Quality Control (IQC) and External Quality Assessment (EQA) schemes (19, 27-28).

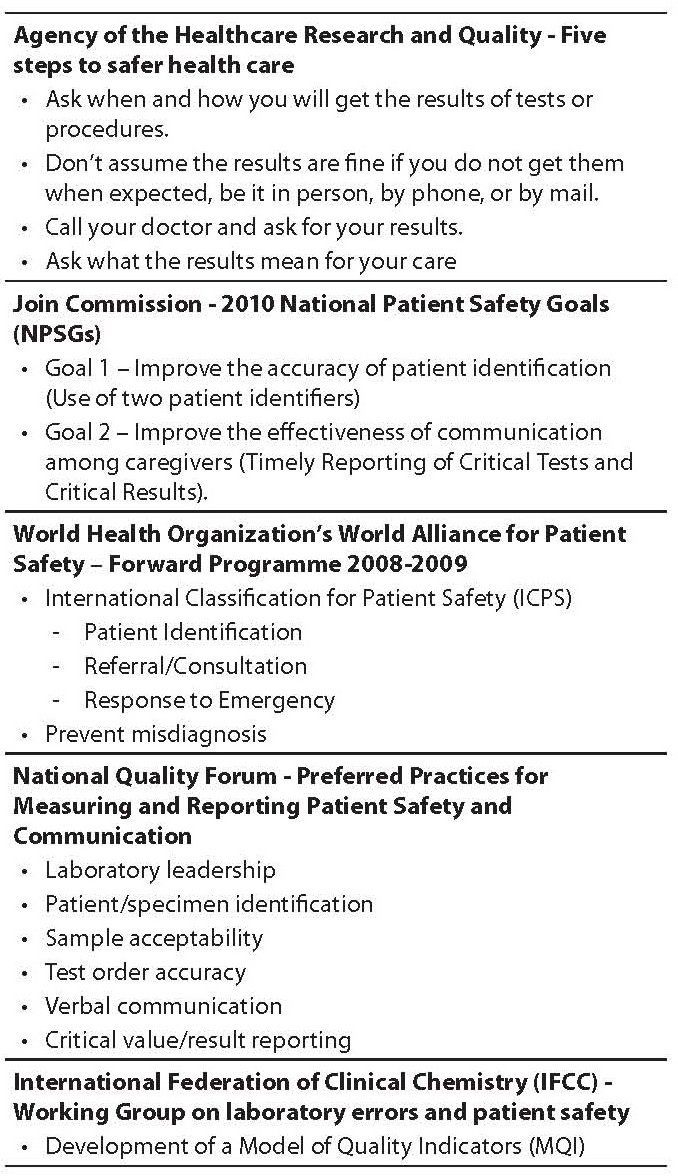

Several national and international bodies and organizations, not necessarily linked to the field of laboratory medicine, still include diagnostic errors among the most preventable causes of harm for the patients. The Patient Fact Sheet issued by the AHRQ lists “Five steps to safer health care” that are:

1. ask questions if you have doubts or concerns;

2. keep and bring a list of all the medicines you take;

3. get the results of any test or procedure;

4. talk to your doctor about which hospital is best for your health needs; and

5. make sure you understand what will happen if you need surgery.

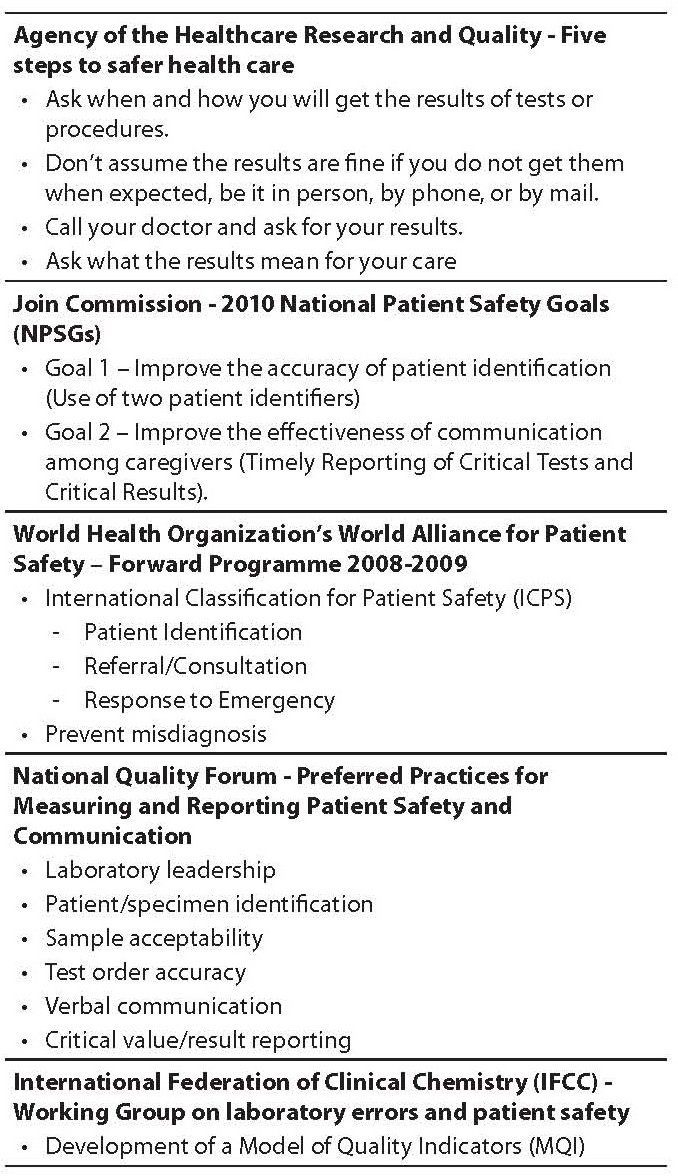

As regards the third item, which actually refers to diagnostic errors, it is clearly specified to “ask when and how you will get the results of tests or procedures. Don’t assume the results are fine if you do not get them when expected, be it in person, by phone, or by mail. Call your doctor and ask for your results. Ask what the results mean for your care” (29). The 2010 National Patient Safety Goals (NPSGs) issued by the Joint Commission still include several items targeting laboratory diagnostics, which are:

1. Goal 1 – Improve the accuracy of patient identification (Use of two patient identifiers),

2. Goal 2 – Improve the effectiveness of communication among caregivers (Timely Reporting of Critical Tests and Critical Results) (30).

In the Forward Programme 2008–2009 issued by the WHO’s World Alliance for Patient Safety, misdiagnosis is also included within the priority areas of research for developed countries (7). Alongside this aim, the National Quality Forum (NQF, supported by the Centers for Disease Control), has recently issued a document entitled “Preferred Practices for Measuring and Reporting Patient Safety and Communication in Laboratory Medicine: A Consensus Report”, where six preferred practices have been endorsed as national voluntary consensus standards to drive quality improvement within the pre- and postanalytical phases of the total testing process (laboratory leadership, patient/specimen identification, sample acceptability, test order accuracy, verbal communication, critical value/result reporting) (31). Interestingly, compliance with these recommendations is not mandatory, since they are mainly aimed at improving both patient safety and communication of laboratory information with stakeholders (Table 2).

Table 2. Major claims on patient safety in laboratory diagnostics.

Incident reporting in medicine and laboratory diagnostics

The one thing we have learned well is that it is almost impossible to have safety where transparency is not assured. In 1994, Lucian L. Leape affirmed that medical errors were not being reported, an assertion that is supported by reliable estimates attesting that as few as 5% and no more than 20% of iatrogenic events are ever reported (5). Errors in healthcare can be identified by several mechanisms. Historically, medical errors were revealed retrospectively through morbidity and mortality committees, malpractice claims data and retrospective chart review to quantify adverse event rates. Basically, the concept of incident recovery, which is derived from industrial science and error theory, is of particular importance for learning from patient safety incidents, since it allows the development of reporting systems for serious accidents and important “near misses” (32). It is inherently a meaningful process by which a contributing factor and/or hazard is identified, acknowledged and addressed, thereby preventing a hazard from developing into an incident. Event reporting is also defined as the primary means through which ADRs and other risks can be identified. The leading purposes of event reporting are to improve the management of an individual patient, identify and correct systems failures, prevent recurrent events, aid in creating a database for risk management and quality improvement purposes, assist in providing a safe environment for patient care, provide a record of the event, and obtain immediate medical advice and legal counsel. To date, the IOM has recommended two types of reports, namely mandatory reports for the small fraction of events resulting in death or serious harm to patients, and voluntary reports focusing on errors that result in minor or temporary harm or near-misses.

In the U.K., the National Patient Safety Agency encourages voluntary reporting of healthcare errors, and considers several specific instances known as “Confidential Enquiries” for which investigation is routinely initiated (i.e., maternal or infant deaths, childhood deaths to age 16, deaths in persons with mental illness, and perioperative and unexpected medical deaths) (33). Medical records and questionnaires are requested from the involved clinician, and participation has been predictably high, since individual details are confidential. In 1995, hospital-based surveillance was mandated by the U.S. Joint Commission on the Accreditation of Healthcare Organizations (JCAHO) because of a perception that incidents resulting in harm were occurring frequently. As one component of its Sentinel Event Policy, the JCAHO created a Sentinel Event Database which accepts voluntary reports of sentinel events from member institutions, patients, families, and the press. In 2005, the U.S. Congress passed the long-debated Patient Safety and Quality Improvement Act, establishing a federal reporting database. Hospital reports of serious patient harm are thus voluntary, and are collected by patient safety organizations under contract to analyze errors and recommend improvements. Reports however remain confidential, and they cannot be used in liability cases (34). An additional example of a national incident reporting system is the Australian Incident Monitoring Study (AIMS), which works under the auspices of the Australian Patient Safety Foundation (35). Investigators have created an anonymous and voluntary near miss and adverse event reporting system for anesthetists based on a form that has been distributed to participants, and which contains instructions, definitions, space for a narrative of the event, and structured sections to record the anesthesia and procedure, demographics about the patient and anesthetist, and what, when, why, where, and how the event occurred. 
Several other examples of incident reporting are running throughout Europe, though they are mostly heterogeneous in their approach, since the local or national system typology may include sentinel event reporting (which is often mandatory by law), specific clinical domain reports (which are often voluntary) and system-wide, all-inclusive reports (which can be either mandatory or voluntary). It is inherently clear however that unless a European body is established to put forward some sort of standardization or harmonization, most national efforts will remain isolated, preventing transferability of results and practices and making benchmark analysis almost impossible.

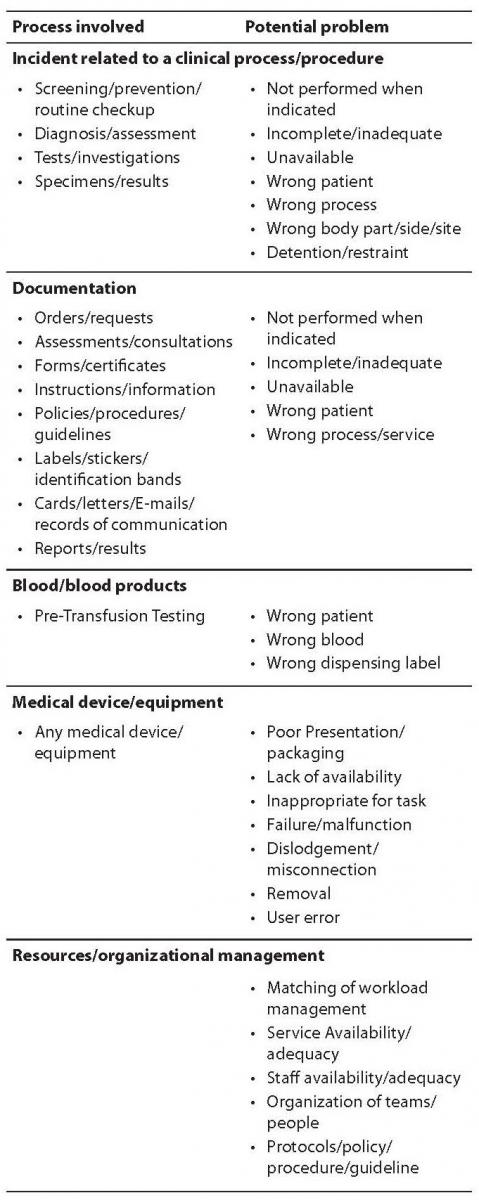

Whilst major focus has been placed on incident reporting for several medical conditions, less effort has been devoted to translating this noteworthy practice into laboratory diagnostics. Laboratory professionals are however patient fiduciaries and thereby responsible for every type of problem involving serious harm for the patient. Whereas major efforts have been made to monitor the state of the art in the preanalytical phase and provide reliable solutions in some countries such as Croatia (36) and Italy (37,38), it is surprising that formal programs of incident reporting have not been as pervasive in laboratory diagnostics as in other healthcare settings. This calls for the urgent establishment of a reliable policy of error recording, possibly through informatics aids (39), and for the definition of universally agreed “laboratory sentinel events” throughout the total testing process, which would allow gaining important information about serious incidents and holding both providers and stakeholders accountable for patient safety. Some of these sentinel events have already been identified, including inappropriate test requests for critical pathologies and patient misidentification (preanalytical phase), use of wrong assays, severe analytical errors, critical tests performed on unsuitable samples and release of laboratory results in spite of poor quality controls (analytical phase), failure to alert critical values and wrong report destination (postanalytical phase) (40,41). The Drafting Group of WHO’s International Classification for Patient Safety (ICPS) has also developed a conceptual framework which might be suitable for diagnostic errors as well, and which consists of 10 high-level classes: incident type, patient outcomes, patient characteristics, incident characteristics, contributing factors/hazards, organizational outcomes, detection, mitigating factors, ameliorating actions, and actions taken to reduce risk. 
Among these, some items can be used for identifying and reporting problems in laboratory diagnostics, as listed in Table 3.
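To make the ICPS framework more concrete, the ten high-level classes named above can be represented as the fields of an incident report record. This is a sketch under stated assumptions: the class name, field types and example values are hypothetical and do not reproduce any official ICPS data format; only the ten class names come from the text.

```python
from dataclasses import dataclass, field, fields
from typing import List


@dataclass
class LabIncidentReport:
    """One report, with one field per ICPS high-level class (types are assumed)."""
    incident_type: str
    patient_outcomes: str
    patient_characteristics: str
    incident_characteristics: str
    contributing_factors_hazards: List[str] = field(default_factory=list)
    organizational_outcomes: List[str] = field(default_factory=list)
    detection: str = ""
    mitigating_factors: List[str] = field(default_factory=list)
    ameliorating_actions: List[str] = field(default_factory=list)
    actions_taken_to_reduce_risk: List[str] = field(default_factory=list)


# Hypothetical example: a postanalytical sentinel event.
report = LabIncidentReport(
    incident_type="failure to alert a critical value",
    patient_outcomes="no harm",
    patient_characteristics="adult inpatient",
    incident_characteristics="postanalytical phase, night shift",
    contributing_factors_hazards=["no read-back procedure"],
    detection="ward physician queried the missing result",
    actions_taken_to_reduce_risk=["automated critical value notification"],
)

icps_classes = [f.name for f in fields(LabIncidentReport)]
print(len(icps_classes), "classes:", ", ".join(icps_classes))
```

A structured record of this kind is what would make reports comparable across laboratories, which is the stated purpose of a common classification.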

Table 3. Examples of incident reporting in laboratory diagnostics: potential indicators from WHO's International Classification for Patient Safety (ICPS).

Solutions

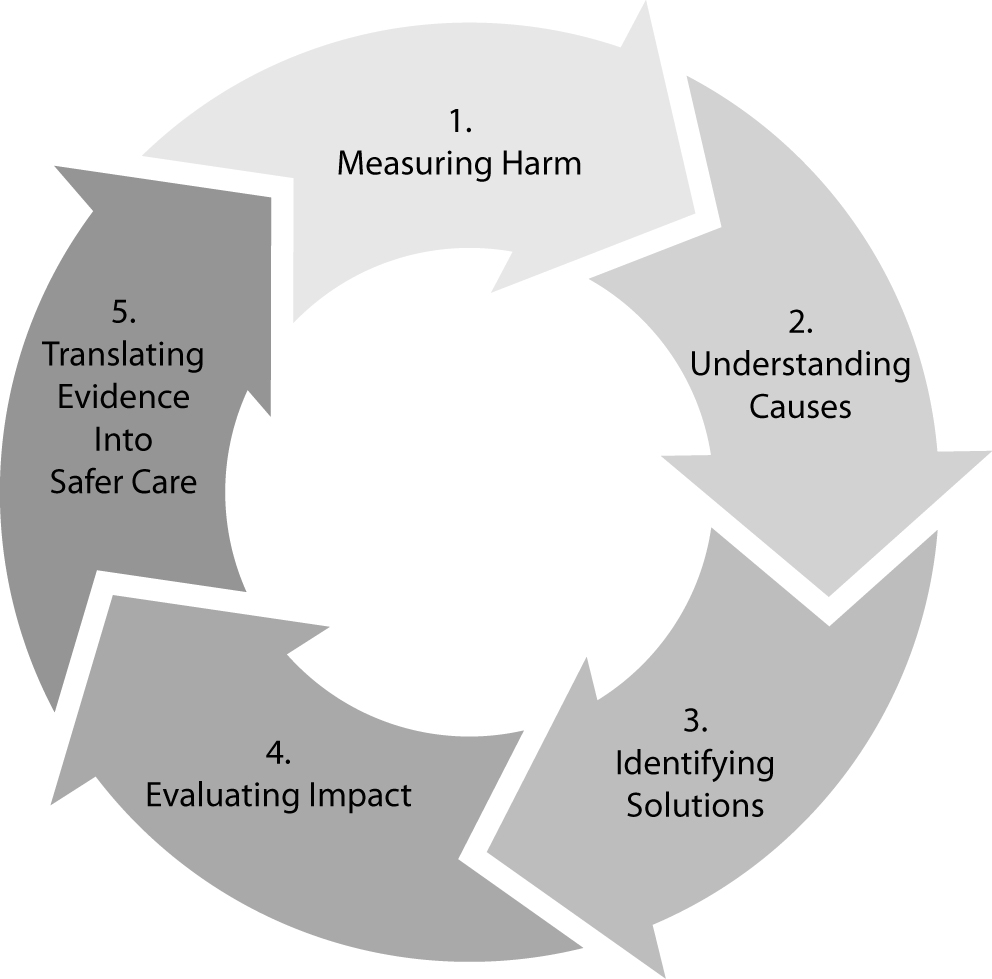

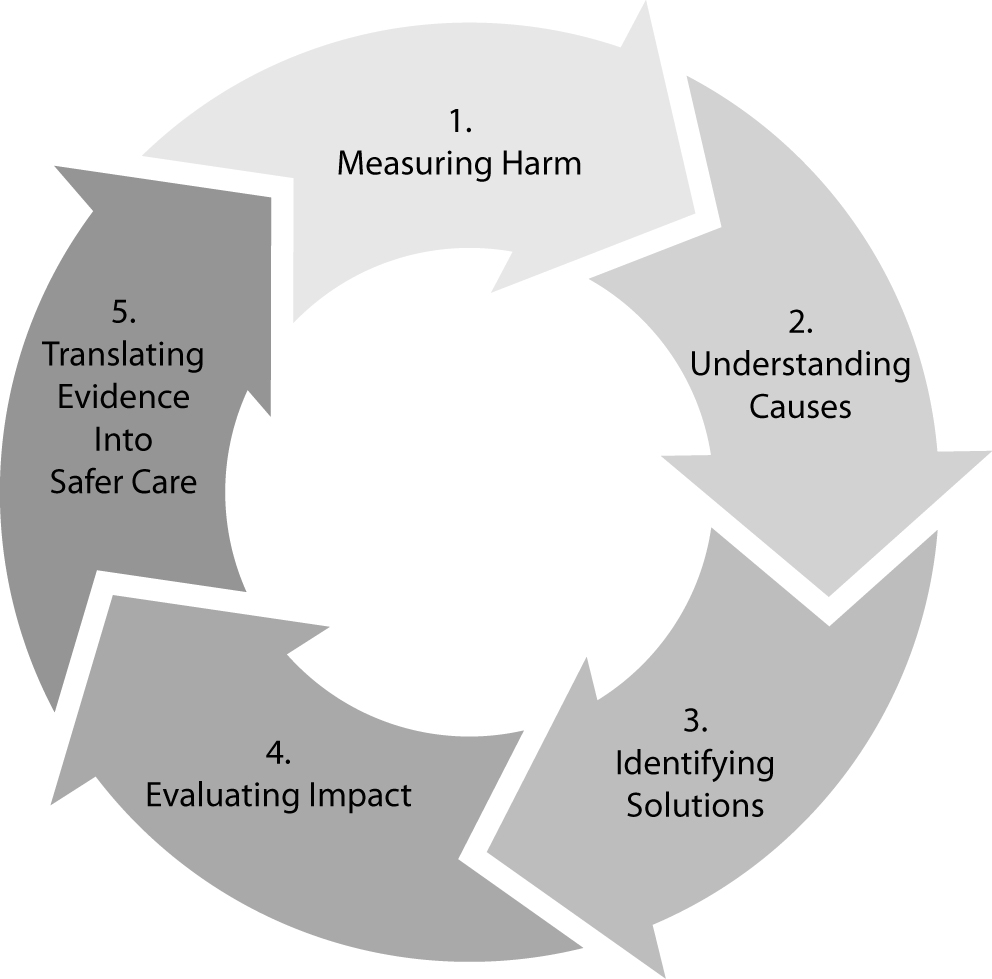

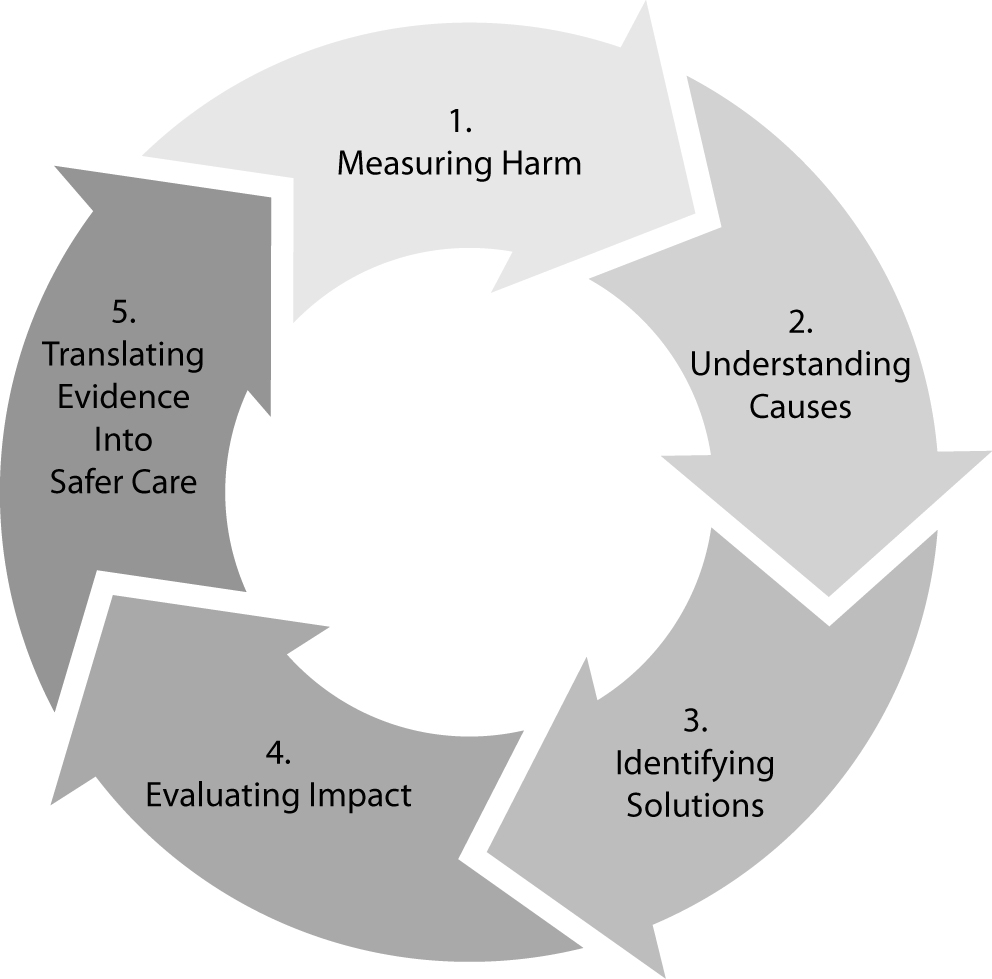

In agreement with the well-known model of James Reason, the most reliable way to enhance patient safety in laboratory diagnostics, and more generally in healthcare, is a multifaceted approach based on predicting potential accidents, reducing the number of latent conditions in the different layers of the system (plugging the holes), and increasing and diversifying the strength of the defenses, so that the probability of accident trajectories and active failures is minimized (42,43). The final result is a kind of “virtuous circle”, where harm is measured, causes are understood, solutions are identified, and the impact and translation of evidence into safer care are finally evaluated, before getting back to the starting point of the loop (Figure 2). In this context, risk management, clinical governance and root cause analysis (RCA) all play a prominent role. Failure Mode and Effect Analysis (FMEA) has been broadly cited as a reliable approach to risk management. It is a systematic process for identifying potential process failures before they occur, with the aim of eliminating them or minimizing the relative risk. This model of risk management was originally developed in the 1940s by the U.S. Army, and further developed by the aerospace and automobile industries. The U.S. Department of Veterans Affairs (VA) National Center for Patient Safety developed a simplified version of FMEA to be applied to healthcare, called Healthcare FMEA (HFMEA) (44). Considering that all human errors, including medical errors, always have a preceding cause, RCA is an additional valuable aid, since it is based on a retrospective analytical approach, which has found broad application in the investigation of major industrial accidents (16). Basically, a root cause is the most basic causal factor which, when corrected or removed, might prevent recurrence of an adverse and unwelcome event (e.g., a medical error). 
As such, RCA focuses on identifying the latent conditions that underlie variation in medical performance and, if applicable, developing recommendations for improvements to decrease the likelihood of a similar incident in the future.
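
As a purely illustrative aid, the prospective logic of FMEA can be sketched in a few lines of Python. The sketch assumes the classical scoring convention, in which each failure mode is rated from 1 to 10 for severity, occurrence and detectability and the product of the three ratings (the risk priority number, RPN) is used for ranking; this convention is an assumption and is not the simplified HFMEA scheme cited above. The failure modes and scores listed are hypothetical examples, not data from any study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FailureMode:
    step: str         # process step where the failure could occur
    description: str  # what could go wrong
    severity: int     # 1 (negligible) to 10 (catastrophic)
    occurrence: int   # 1 (remote) to 10 (frequent)
    detection: int    # 1 (almost always detected) to 10 (rarely detected)

    def __post_init__(self):
        # Reject ratings outside the assumed 1-10 scale.
        for name in ("severity", "occurrence", "detection"):
            value = getattr(self, name)
            if not 1 <= value <= 10:
                raise ValueError(f"{name} must be between 1 and 10, got {value}")

    @property
    def rpn(self) -> int:
        # Classical risk priority number (assumed convention).
        return self.severity * self.occurrence * self.detection


def rank_failure_modes(modes):
    """Return failure modes sorted from highest to lowest RPN."""
    return sorted(modes, key=lambda m: m.rpn, reverse=True)


# Hypothetical failure modes with hypothetical scores.
modes = [
    FailureMode("patient identification", "sample labelled with wrong patient", 9, 3, 6),
    FailureMode("sample collection", "hemolyzed specimen", 5, 6, 2),
    FailureMode("result reporting", "critical value not communicated", 8, 2, 5),
]

ranked = rank_failure_modes(modes)
for mode in ranked:
    print(f"{mode.rpn:4d}  {mode.step}: {mode.description}")
```

In such a scheme, the failure modes at the top of the ranking would be the first candidates for preventive action, whereas RCA would be applied retrospectively once an event has actually occurred.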

Figure 2. The virtuous path of enhancing safety in healthcare

As for any other type of medical error, development and widespread implementation of a total quality management system is the most effective strategy to minimize uncertainty in laboratory diagnostics. Pragmatically, this can be achieved through three complementary actions, namely preventing adverse events (error prevention), making them visible (error detection), and mitigating their adverse consequences when they occur (error management). Owing to the volume and complexity of testing, a large number of errors still occur in laboratory diagnostics, especially in the extra-analytical phases of testing. In particular, the high frequency of errors still attributable to processes external to the laboratory requires additional efforts for the governance of this neglected phase of the total testing process (23,42,43). A primary solution is the adoption of uniform reporting schemes for error events based on reliable quality indicators covering both the analytical and extra-analytical phases of testing (45). To this end, the Education and Management Division (EMD) of the International Federation of Clinical Chemistry and Laboratory Medicine (IFCC) has established a Working Group named “Laboratory Errors and Patient Safety (WG-LEPS)”, with the specific mission to promote and encourage investigations into errors in laboratory medicine, gather available data on this issue, and establish strategies and paths for improving patient safety (46). The anticipated outcome is the creation of a reliable Model of Quality Indicators (MQI), which would enable major improvements in laboratory performance and help identify suitable actions to undertake when dealing with critical events throughout the total testing process. In strict analogy with the analytical phase, the next step to improve the quality of the total testing process is therefore the development and introduction of internal quality control (IQC) and external quality assessment (EQA) programs embracing the total testing process, both upstream and downstream of the analytical phase. There are already some noteworthy examples of how this can be translated into practice, such as the forthcoming introduction of a national EQA scheme in Croatia for the preanalytical phase (24), or the development of a reliable program of quality control of the hemolysis index among different laboratories (47,48).
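
To illustrate what a uniform reporting scheme based on quality indicators might look like in practice, the following minimal Python sketch tallies reported error events by phase of the total testing process and expresses each tally as errors per 100 tests. The phase names, the function `error_rates_by_phase`, the choice of denominator and the example events are all assumptions made for illustration; they do not reproduce the indicators defined by the WG-LEPS or by any other published model.

```python
from collections import Counter

# Assumed three-phase breakdown of the total testing process.
PHASES = ("preanalytical", "analytical", "postanalytical")


def error_rates_by_phase(events, total_tests):
    """Return errors per 100 tests for each phase.

    events: iterable of (phase, description) tuples.
    total_tests: number of tests performed in the same period.
    """
    if total_tests <= 0:
        raise ValueError("total_tests must be positive")
    counts = Counter(phase for phase, _ in events)
    unknown = set(counts) - set(PHASES)
    if unknown:
        raise ValueError(f"unknown phase(s): {sorted(unknown)}")
    return {phase: 100.0 * counts[phase] / total_tests for phase in PHASES}


# Hypothetical error events recorded over a period with 1000 tests.
events = [
    ("preanalytical", "hemolyzed sample"),
    ("preanalytical", "misidentified sample"),
    ("preanalytical", "insufficient sample volume"),
    ("analytical", "calibration failure"),
    ("postanalytical", "delayed critical value notification"),
]

rates = error_rates_by_phase(events, total_tests=1000)
for phase, rate in rates.items():
    print(f"{phase}: {rate:.2f} errors per 100 tests")
```

Expressing every indicator against a common denominator is what would allow results to be compared over time and among laboratories, which is the purpose of an EQA scheme covering the extra-analytical phases.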

Conclusions

Patient safety is the healthcare discipline that emphasizes the reporting, analysis, and prevention of medical errors that often lead to adverse healthcare events. Besides causing serious harm to patients’ health, medical errors wipe a huge amount of money out of national and international economies. Significant progress has been made since the release of “To Err is Human”. Basically, what has changed is the willingness to recognize the challenge and not argue about the numbers, but appreciate that care must be safe always and everywhere for each patient. This has led to remarkable changes in the culture of healthcare organizations, so that medical errors can no longer be seen as inevitable, but as something that can be actively reduced and prevented. At this point in time, reliable patient-centered initiatives should be promoted or reinforced to make the healthcare arena a safe place for everybody. Attention to organizational issues of structure, strategy and education, that is, establishing and disseminating a “real” culture of safety, is a foremost priority. Over the past century laboratory medicine has been a forerunner in pursuing the issue of patient safety, and several recent endeavors confirm that we are probably on the right path to delivering safe, high-quality care.

Thousands of analysts and advocates worldwide are looking for ways to make the health care system work more efficiently. The solution is apparently simple: healthcare should be delivered in a more efficient and affordable manner, with better quality and clinical outcomes. Significant improvements therefore require an overhaul of the delivery system, which cannot be accomplished without sizable investments from governments and national healthcare systems. Almost everything in laboratory diagnostics, and more generally in healthcare, is being developed in response to market demand, but a lot more might be done for patient safety with more financial incentives. We all know that money devoted to quality is money best spent, and that it is always associated with a paradoxical but tangible reduction of costs.

Notes

Potential conflict of interest

None declared.

References

1. Berwick DM, Leape LL. Reducing errors in medicine. BMJ 1999;319:136-7.

2. Lazarou J, Pomeranz BH, Corey PN. Incidence of adverse drug reactions in hospitalized patients: a meta-analysis of prospective studies. JAMA 1998;279:1200-5.

3. Null G, Dean C, Feldman M, Rasio D, Smith D. Death by medicine. Life Extension Magazine. August 2006.

4. Kohn LT, Corrigan JM, Donaldson MS. To Err Is Human: Building a Safer Health System. Washington, DC: National Academy Press; 1999.

5. Leape LL. Error in medicine. JAMA 1994;272:1851-7.

6. Institute of Medicine. Crossing the Quality Chasm: A New Health Care System for the 21st Century. Washington, DC: National Academy Press, 2001.

9. Larkin H. 10 years, 5 voices, 1 challenge. To Err is Human jump-started a movement to improve patient safety. How far have we come? Where do we go from here? Hosp Health Netw 2009;83:24-8.

11. Wise J. NHS operating framework for 2010-11 places emphasis on quality. BMJ 2009;339:b5573.

12. Lippi G, Guidi GC. Laboratory errors and Medicare’s new reimbursement rule. LabMedicine 2008;39:5-6.

14. National Audit Office. Department of Health. A Safer Place for Patients: Learning to improve patient safety. London: Comptroller and Auditor General (HC 456 Session 2005-2006).

15. Wu AW, Cavanaugh TA, McPhee SJ, Lo B, Micco GP. To tell the truth: Ethical and practical issues in disclosing medical mistakes to patients. J Gen Intern Med 1997;12:770–5.

16. Reason JT. Human Error. New York: Cambridge Univ Press 1990.

17. ISO/PDTS 22367. Medical laboratories: reducing error through risk management and continual improvement: complementary element.

18. Plebani M. Errors in clinical laboratories or errors in laboratory medicine? Clin Chem Lab Med 2006;44:750-9.

19. Plebani M, Lippi G. To err is human. To misdiagnose might be deadly. Clin Biochem 2010;43:1-3.

20. Plebani M. Laboratory errors: How to improve pre- and post-analytical phases? Biochem Med 2007;17:5-9.

21. Plebani M. Exploring the iceberg of errors in laboratory medicine. Clin Chim Acta 2009;404:16-23.

22. Lippi G, Guidi GC, Mattiuzzi C, Plebani M. Preanalytical variability: the dark side of the moon in laboratory testing. Clin Chem Lab Med 2006;44:358-65.

23. Favaloro EJ, Lippi G, Adcock DM. Preanalytical and postanalytical variables: the leading causes of diagnostic error in hemostasis? Semin Thromb Hemost 2008;34:612-34.

24. Lippi G, Simundic AM. Total quality in laboratory diagnostics. It’s time to think outside the box. Biochem Med 2010;20:5-8.

25. Hilborne LH, Lubin IM, Scheuner MT. The beginning of the second decade of the era of patient safety: implications and roles for the clinical laboratory and laboratory professionals. Clin Chim Acta 2009;404:24-7.

26. Raab SS. Improving patient safety through quality assurance. Arch Pathol Lab Med 2006;130:633-7.

27. Burnett D, Ceriotti F, Cooper G, Parvin C, Plebani M, Westgard J. Collective opinion paper on findings of the 2009 convocation of experts on quality control. Clin Chem Lab Med 2010;48:41-52.

28. Westgard JO. Managing quality vs. measuring uncertainty in the medical laboratory. Clin Chem Lab Med 2010;48:31-40.

32. van der Schaaf TW, Clarke JR. Ch 7 – Near Miss Analysis. In: Aspden P, Corrigan J, Wolcott J, Erickson S (eds). Institute of Medicine, Committee on Data Standards for Patient Safety, Board on Health Care Services. Patient Safety: Achieving a New Standard for Care. Washington, DC: National Academies of Sciences, 2004.

34. US Agency for Healthcare Research & Quality. Beyond State Reporting: Medical Errors and Patient Safety Issues. Available at: http://www.ahrq.gov/qual/errorsix.htm. Accessed March 23, 2008.

35. Webb R, Currie M, Morgan C, Williamson J, Mackay P, Russell W, et al. The Australian Incident Monitoring Study: an analysis of 2000 incident reports. Anaesth Intens Care 1993;21:520-8.

36. Bilic-Zulle L, Simundic AM, Supak Smolcic V, Nikolac N, Honovic L. Self reported routines and procedures for the extra-analytical phase of laboratory practice in Croatia – cross-sectional survey study. Biochem Med 2010;20:64-74.

37. Lippi G, Montagnana M, Giavarina D. National survey on the pre-analytical variability in a representative cohort of Italian laboratories. Clin Chem Lab Med 2006;44:1491-4.

38. Lippi G, Banfi G, Buttarello M, Ceriotti F, Daves M, Dolci A, et al. Recommendations for detection and management of unsuitable samples in clinical laboratories. Clin Chem Lab Med 2007;45:728-36.

39. Lippi G, Bonelli P, Rossi R, Bardi M, Aloe R, Caleffi E, Bonilauri E. Development of a preanalytical errors recording software. Biochem Med 2010;20:90-5.

40. Lippi G, Mattiuzzi C, Plebani M. Event reporting in laboratory medicine. Is there something we are missing? MLO Med Lab Obs 2009;41:23.

41. Lippi G, Plebani M. The importance of incident reporting in laboratory diagnostics. Scand J Clin Lab Invest 2009;69:811-3.

42. Lippi G, Guidi GC. Risk management in the preanalytical phase of laboratory testing. Clin Chem Lab Med 2007;45:720-7.

43. Lippi G. Governance of preanalytical variability: travelling the right path to the bright side of the moon? Clin Chim Acta 2009;404:32-6.

44. DeRosier J, Stalhandske E, Bagian JP, Nudell T. Using health care Failure Mode and Effect Analysis: the VA National Center for Patient Safety’s prospective risk analysis system. Jt Comm J Qual Improv 2002;5:248-67.

45. Simundic AM, Topic E. Quality indicators. Biochem Med 2008;18:311-9.

46. Sciacovelli L, Plebani M. The IFCC Working Group on laboratory errors and patient safety. Clin Chim Acta 2009;404:79-85.

47. Lippi G, Luca Salvagno G, Blanckaert N, Giavarina D, Green S, et al. Multicenter evaluation of the hemolysis index in automated clinical chemistry systems. Clin Chem Lab Med 2009;47:934-9.

48. Plebani M, Lippi G. Hemolysis index: quality indicator or criterion for sample rejection? Clin Chem Lab Med 2009;47:899-902.